Posit news

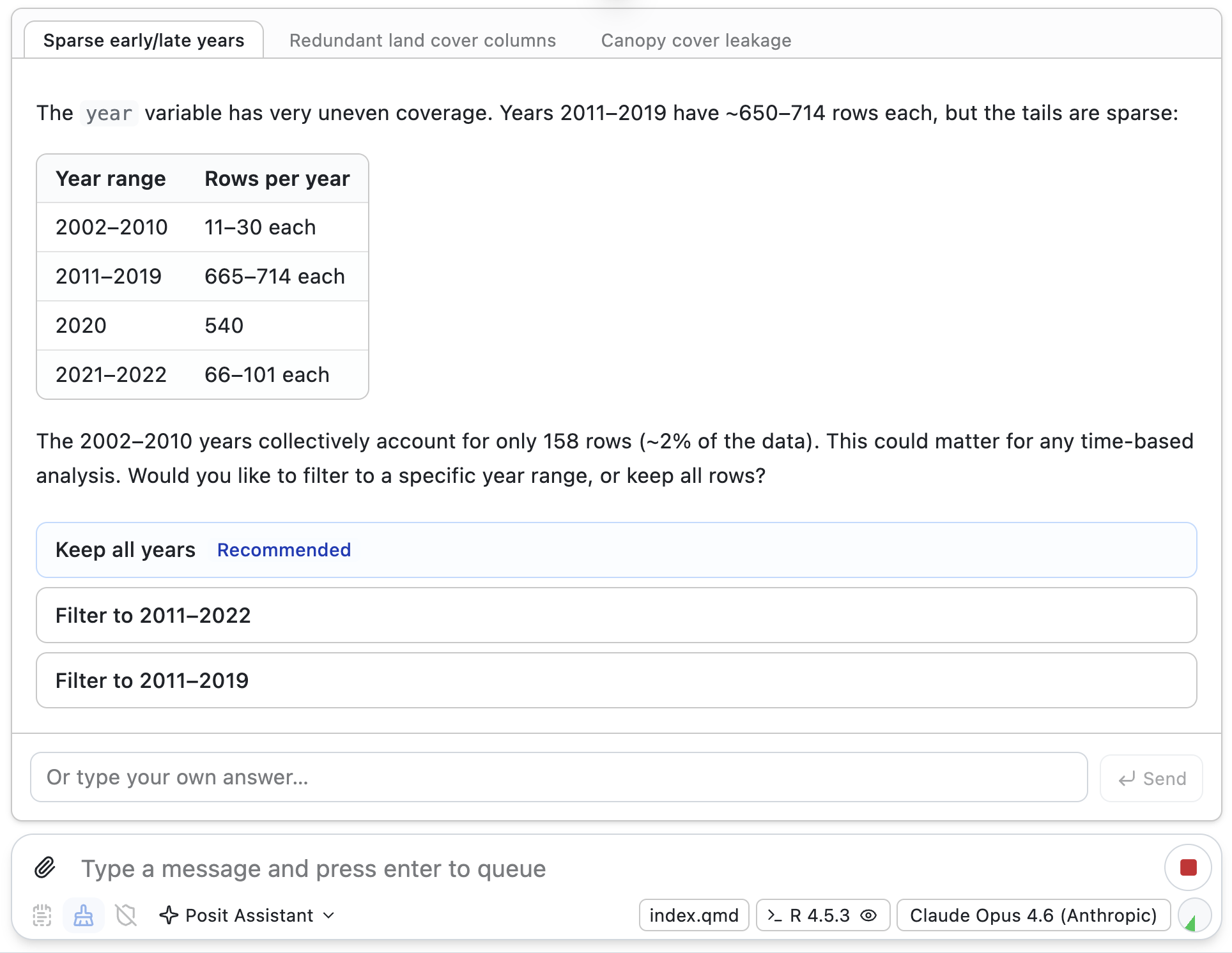

The latest release of Posit Assistant includes a Data Cleaning Mode. In this mode, Posit Assistant spends time identifying and fixing data quality issues and preparing your data for analysis. When a choice (e.g., how to recode a variable) requires your input, it surfaces that choice in a specialized interface. Once you and Posit Assistant have finished making decisions about the cleaning process, all the cleaning code is written to a script.

Broadly, we’ve seen that many coding agents seem to have a superficial regard for data quality, focusing only on errors thrown by analysis code. Data Cleaning Mode is one of several features we’re iterating on to tailor the agent experience more closely to the real work of data science.

The initial release of the tabpfn package recently made it to CRAN. TabPFN is a pre-trained neural network for tabular data. In a typical predictive modeling workflow, you train a model on existing data (the training data) and then apply the resulting model to new, unseen data. TabPFN, in contrast, is pre-trained on a vast array of synthetic datasets. When you use TabPFN, the model learns from the analyst’s training data in-context, similar to the way an LLM can ‘learn’ over the course of a conversation, which allows it to predict on new data without an explicit training step. The tabpfn package provides R tidymodels bindings to the pre-trained model.

Terms

TabPFN and LLMs rely on in-context learning to provide more helpful responses.

Many recent model releases have announced increased context windows of 1 million tokens.[1] The context window is the maximum length of the ‘conversation history’ that the model can take into account. So, if you’ve exchanged 10 messages with an LLM and each message was 100 tokens long, the context length would be 1,000 tokens, well under the context window of most modern LLMs.
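The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, and the whitespace-based token count is a crude stand-in that leans on the rule of thumb that a token is roughly a word. Real tokenizers split text differently.

```python
# Context window size taken from the 1-million-token figure mentioned above.
CONTEXT_WINDOW = 1_000_000


def rough_token_count(text):
    """Very rough estimate: treat each whitespace-separated word as one token.

    Assumption for illustration only; real tokenizers produce different counts.
    """
    return len(text.split())


def context_length(num_messages, tokens_per_message):
    """Total tokens in a conversation where every message has the same length."""
    return num_messages * tokens_per_message


# The example from the text: 10 messages of 100 tokens each.
used = context_length(10, 100)
remaining = CONTEXT_WINDOW - used

print(used)       # 1000
print(remaining)  # 999000
print(rough_token_count("How many tokens is this sentence?"))  # 6
```

Under these assumptions, the conversation in the example uses only a small fraction of a 1-million-token window.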

LLMs learn in a few different stages. The most recognizable stage is probably pre-training, in which the model gains general knowledge and capabilities by learning from massive collections of text. Another important stage of learning is in-context learning, which happens over the course of a conversation. For example, if you paste a document into an LLM chat and then start asking questions about it, the document has been learned in-context.

TabPFN follows the same learning setup as modern LLMs. The TabPFN team pre-trains the neural network and then makes the resulting weights available for users to download. The model then learns from the analyst’s training data in-context.
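To make the shape of that workflow concrete, here is a toy Python sketch. It is not TabPFN and does not use the tabpfn package: `predict_in_context` and `PRETRAINED_SHARPNESS` are hypothetical names, and the single fixed number stands in for downloaded pre-trained weights. The point is only that the training data is supplied at prediction time and no parameter is updated along the way.

```python
import math

# Hypothetical stand-in for pre-trained weights: one fixed number.
# Nothing in this script ever updates it.
PRETRAINED_SHARPNESS = 2.0


def predict_in_context(context_rows, context_labels, query_row):
    """Predict a label for query_row by weighting the labeled context rows.

    The training data arrives as an argument at prediction time, and the
    only "parameter" (PRETRAINED_SHARPNESS) stays fixed throughout.
    """
    # Score each context row by its negative squared distance to the query.
    scores = [
        -PRETRAINED_SHARPNESS * sum((a - b) ** 2 for a, b in zip(row, query_row))
        for row in context_rows
    ]
    # Softmax turns the scores into weights that sum to 1.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]

    # Add up the weight behind each label and return the heaviest one.
    votes = {}
    for weight, label in zip(weights, context_labels):
        votes[label] = votes.get(label, 0.0) + weight
    return max(votes, key=votes.get)


# The analyst's training data, passed in as context rather than used for fitting.
train_rows = [(1.0, 1.0), (1.2, 0.8), (5.0, 5.1), (4.8, 5.3)]
train_labels = ["small", "small", "large", "large"]

prediction_a = predict_in_context(train_rows, train_labels, (1.1, 0.9))
prediction_b = predict_in_context(train_rows, train_labels, (5.1, 5.0))
print(prediction_a, prediction_b)  # small large
```

A real pre-trained network is far more expressive than this distance-based weighting, but the calling pattern is the part the sketch is meant to show: fixed weights plus labeled rows in, predictions out, with no separate fitting step in between.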

Learn more

- OpenAI released GPT 5.5, an incremental improvement over GPT 5.4. Google Gemini released Deep Research Max, an incremental improvement over its Deep Research tool.

- Yihui Xie wrote up a thoughtful reflection on his experience with AI-assisted coding.

- LLM use for open-source contributions can be controversial. Zig has instituted a ban on LLM contributions. You can read their rationale here.

[1] A token is, roughly, a word.