Pandas - History and Future - Talk Python to Me Ep.462

This episode dives into some of the most important data science libraries from the Python space with one of its pioneers: Wes McKinney. He's the creator or co-creator of the pandas, Apache Arrow, and Ibis projects and an entrepreneur in this space.

About the podcast

This video is the uncut, live recording of the Talk Python To Me podcast (https://talkpython.fm). We cover Python-focused topics every week and publish the edited and polished version in audio form. Subscribe in your podcast player of choice (100% free) at https://talkpython.fm/subscribe.

Guests

Wes' Website: https://wesmckinney.com/

Links and resources from the show

Pandas: https://pandas.pydata.org/
Apache Arrow: https://arrow.apache.org/
Ibis: https://ibis-project.org/
Python for Data Analysis - Groupby Summary: https://wesmckinney.com/book/data-aggregation.html#groupby-summary
Polars: https://pola.rs/
Dask: https://www.dask.org/
Sqlglot: https://sqlglot.com/sqlglot.html
Pandoc: https://pandoc.org/
Quarto: https://quarto.org/
Evidence framework: https://evidence.dev/
pyscript: https://pyscript.net/
duckdb: https://duckdb.org/
Jupyterlite: https://jupyter.org/try-jupyter/lab/
Djangonauts: https://djangonaut.space/
Listen to this episode on Talk Python: https://talkpython.fm/episodes/show/462/pandas-and-beyond-with-wes-mckinney
Episode transcripts: https://talkpython.fm/episodes/transcript/462/pandas-and-beyond-with-wes-mckinney

Dive deeper

Listen to the Talk Python To Me podcast at https://talkpython.fm
Over 250 hours of Python courses at https://training.talkpython.fm/courses
Follow us on Mastodon. Michael: https://fosstodon.org/@mkennedy & Talk Python https://fosstodon.org/@talkpython

image: thumbnail.jpg

Transcript

This transcript was generated automatically and may contain errors.

Hey Wes, welcome to Talk Python to Me. Thanks for having me. You know, honestly, I feel like it's been a long time coming, having you on the show. You've had such a big impact in the Python space, especially the data science side of that space. It's high time to have you on the show, so welcome. Good to have you.

Yeah, it's great to be here. I've been heads down a lot the last N years, because I think a lot of my work has been more like data infrastructure and working at even a lower level than Python. So I haven't been engaging as much directly with the Python community. But it's been great to kind of get back more involved and start catching up on all the things that people have been building.

So being at Posit gives me the ability to have more exposure to what's going on with people who are using Python in the real world. Yeah, there's a ton of stuff going on at Posit that's super interesting, and we'll talk about some of that. You know, sometimes it's just really fun to build and work with people building things.

Wes McKinney's background

Well, before we dive into Pandas and all the things that you've been working on after that, let's just hear a quick bit about yourself for folks who don't know you. So, yeah, my name is Wes McKinney. I grew up in Akron, Ohio, mostly, and I started getting involved in Python development around 2007, 2008. I was working in quant finance at the time. I started building a personal data analysis toolkit that turned into the Pandas project, and then open-sourced that in 2009.

I started getting involved in the Python community, and I spent several years writing my book, Python for Data Analysis, and then working with the broader scientific Python data science community to help enable Python to become a mainstream programming language for doing data analysis and data science. And in the meantime, I've become an entrepreneur. I've started some companies and have been working to, you know, innovate and improve the computing infrastructure that powers data science tools and libraries like Pandas. So that's led to some other projects like Apache Arrow and Ibis and some other things.

And, yeah, in recent years, I've worked on a startup, Voltron Data, which is still, you know, very much going strong and has a big team and is off to the races. And I've had a long relationship with Posit, formerly RStudio, and they were my home for doing Arrow development from 2018 to 2020. They helped me incubate the startup that became Voltron Data. And so I've gone back to work full-time there as a software architect to help them with their Python strategy to make sort of their data science platform a delight to use for the Python user base.

About Posit

I'm pretty impressed with what they're doing. I didn't realize the connection between Voltron and Posit, but I have had Joe Cheng on the show before to talk about Shiny for Python. And I've seen him demo a few really interesting things, how it integrates into notebooks these days, some of the stuff that you all are doing. Maybe give people a quick elevator pitch on that while we're on that subject.

Yeah, so Posit started out in 2009 as RStudio, and so it didn't start out intending to be a company. JJ Allaire and Joe Cheng built a new IDE, Integrated Development Environment, for R because what was available at the time wasn't great. They made that into, I think, probably one of the best data science IDEs that's ever been built. It's really an amazing piece of tech.

So it started becoming a company with customers and revenue in the 2013 time frame, and they've built a whole suite of tools to support enterprise data science teams to make open-source data science work in the real world. But the company itself, it's a certified B corporation, has no plans to go public or IPO. It is dedicated to the mission of open-source software for data science and technical communication, and basically building itself to be a 100-year company that has a revenue-generating enterprise product side and an open-source side so that the open-source feeds the enterprise part of the business. The enterprise part of the business generates revenue to support the open-source development, and the goal is to be able to sustainably support the mission of open-source data science for hopefully the rest of our lives.

It's an amazing company. It's been one of the most successful companies that dedicates a large fraction of its engineering time to open-source software development. I'm very impressed with the company and JJ Allaire, its founder. I'm excited to be helping it grow and become a sustainable long-term fixture in the ecosystem.

Many people know JJ Allaire created ColdFusion, which was the original dynamic web development framework in the 1990s. He and his brother Jeremy and some others built Allaire Corp to commercialize ColdFusion. They built a successful software business that was acquired by Macromedia, which was eventually acquired by Adobe. Allaire Corp did go public during the dot-com bubble, and JJ went on to found a couple of other successful startups.

I think he found himself in his late 30s 15 years ago, or around the age I am now, having been very successful as an entrepreneur, no need to make money, and looking for a mission to spend the rest of his career on. Data science and statistical computing, and in particular making open source work for data science, was the mission that he aligned with and something that he had been interested in earlier in his career, but he had gotten busy with other things. I think it's really refreshing to work with people who are really mission-focused and focused on making impact in the world, creating great software, empowering people, increasing accessibility, and making most of it available for free on the Internet.

What is Pandas?

Speaking of history, let's jump in. There's a possibility that people out there listening don't know what Pandas is. Maybe for that crew, we could introduce what Pandas is.

Absolutely. It's a data manipulation and analysis toolkit for Python. It's a Python library that you install that enables you to read data files, so read many different types of data files off of disk or off of remote storage or read data out of a database or some other remote data storage system. This is tabular data, so it's structured data like with columns. You can think of it like a spreadsheet or some other tabular data set.

Then it provides you with this DataFrame object, which is pandas.DataFrame, that is the main tabular data object. It has a ton of methods for accessing, slicing, grabbing subsets of the data, applying functions on it that do filtering and subsetting and selection as well as more analytical operations like things that you might do with a database system or SQL, so joins and lookups as well as analytical functions like summary statistics, grouping by some key and producing summary statistics.
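As a rough illustration of the operations just described (filtering, a SQL-style join, and grouping by a key to get summary statistics), here is a minimal sketch. The tables, column names, and values are invented for the example and do not come from the episode.

```python
import pandas as pd

# Hypothetical data, invented purely for illustration.
orders = pd.DataFrame({
    "customer_id": [1, 2, 1, 3],
    "amount": [20.0, 35.0, 15.0, 50.0],
})
customers = pd.DataFrame({
    "customer_id": [1, 2, 3],
    "region": ["east", "west", "east"],
})

# Filtering: keep only the orders of at least 20.
large_orders = orders[orders["amount"] >= 20.0]

# Join: attach each customer's region to their orders, like a SQL left join.
joined = orders.merge(customers, on="customer_id", how="left")

# Group by a key and produce summary statistics per group.
summary = joined.groupby("region")["amount"].agg(["sum", "mean"])
print(summary)
```

Each step returns a new DataFrame, so the operations can be chained or inspected one at a time.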

It's basically a Swiss army knife for doing data manipulation, data cleaning, and supporting the data analysis workflow. But it doesn't actually include very much as far as actual statistics or models. If you're doing something with LLMs or linear regression or some type of machine learning, you have to use another library, but Pandas is the on-ramp for all of the data into your environment in Python.

So when people are building some kind of application that touches data in Python, Pandas is often the initial on-ramp for how data gets into Python where you clean up the data, you regularize it, you get it ready for analysis, and then you feed the clean data into the downstream statistical library or data analysis library that you're using. That whole data wrangling side of things, right? Yeah, that's right.

History of Pandas and NumPy

So Python had arrays like matrices and what we call tensors now, multidimensional arrays going back all the way to 1995, which is pretty early history for Python. The Python programming language has only been around since 1990 or 1991, if my memory serves. But what became NumPy in 2005-2006 started out as Numeric in 1995, and it provided numerical computing, multidimensional arrays, matrices, the kind of stuff that you might do in MATLAB, but it was mainly focused on numerical computing and not with the type of business data sets that you find in database systems which contain a lot of strings or dates or non-numeric data.

And so my initial interest was I found Python to be a really productive programming language. I really liked writing code in it, writing simple scripts, like doing random things for my job. And you had this numerical computing library, NumPy, which enabled you to work with large numeric arrays and large data sets with a single data type. But working with this more tabular type data, stuff that you would do in Excel or stuff that you do in a database, it wasn't very easy to do that with NumPy, or it wasn't really designed for that. And so that's what led to building this higher-level library that deals with these tabular data sets in the Pandas library.

One thing I find interesting about Pandas is it's almost its own programming environment these days in the sense that traditional Python, we do a lot of loops. We do a lot of attribute dereferencing, function calling. And a lot of what happens in Pandas is more functional. It's more applied to us. It's almost like set operations, right? And a lot of vector operations and so on.

Yeah, and that was behaviors inherited from NumPy. So NumPy is very array-oriented, vector-oriented. So rather than write a for loop, you would write an array expression which would operate on whole batches of data in a single function call, which is a lot faster because you can drop down into C code and get good performance that way.
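To make the contrast concrete, here is a small sketch of the same calculation written as a Python for loop and as a single NumPy array expression. The array names and values are made up for illustration.

```python
import numpy as np

# Hypothetical prices and quantities, invented for illustration.
prices = np.array([10.0, 12.5, 8.0, 20.0])
quantities = np.array([3, 1, 4, 2])

# Loop version: one multiplication per Python-level iteration.
totals_loop = []
for price, quantity in zip(prices, quantities):
    totals_loop.append(price * quantity)

# Array expression: one call that operates on the whole batch,
# with the element-wise loop running in compiled code inside NumPy.
totals_vec = prices * quantities

print(totals_vec)
```

Both versions compute the same values; on large arrays the array expression is typically much faster because the per-element work no longer goes through the Python interpreter.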

Learning Pandas and the book

One of the challenges, I think, is that some of these operations are not super obvious that they exist or that they're discoverable, right? How do you recommend people, like, kind of discover a little bigger breadth of what they can do?

I mean, there's great books written about Pandas. So there's my book, Python for Data Analysis. I think Matt Harrison has written an excellent book, Effective Pandas. The Pandas documentation, I think, provides really nitty-gritty detail about how all the different things work. But, you know, when I was writing this book, Python for Data Analysis, my goal was to provide a primer, like a tutorial, in how to solve data problems with Pandas.

And it is now freely, as you're showing there on the screen, it is freely available on the internet. So JJ Allaire helped me port the book to use Quarto, which is a new technical publishing system for writing books and blogs and a website, you know, quarto.org. And there it is. And, yeah, so that's how I was able to publish my book on the internet, as, you know, essentially you can use Quarto to write books using notebooks, which is cool.

My book was written a long time ago in O'Reilly's DocBook XML, so not particularly fun to edit. But, yeah, because Quarto is built on Pandoc, which is a sort of markup language transpilation system. So you can use Pandoc to convert from one, you know, to convert documents from one format to another. And so that's the kind of the root framework that Quarto is built on for, you know, generating, starting with one document format and generating many different types of output formats.

That's cool. I didn't realize your book was available just to read on the internet. Yeah, yeah. So in the third edition, I was able to negotiate with O'Reilly and make an amendment to my very old book contract from 2011 to let me release the book for free on my website. So, yeah, it's just available there at wesmckinney.com/book.

I find that, like, a lot of people really like the print book. And so I think that having the online book just available, like whenever you are somewhere and you want to look something up, is great. Print books are hard to search. Yeah, that's true.

I thought that releasing the book for free online would affect sales, but people just really like having paper books, it seems, even in 2024.

Quarto and Pandoc

This Quarto thing looks super interesting. If you look at Pandoc, if people haven't looked at this before, the conversion matrix, I don't know how you would… How would you describe this, Wes? Busy? Complete? What is this? This is crazy. It's very busy, yeah.

Well, as history, like, back story about Quarto, so it helps to keep in mind that JJ created ColdFusion, which was this, you know, essentially publishing system, early publishing system for the internet, similar to CGI and PHP and other dynamic, you know, web publishing systems. And so early on at RStudio, they created RMarkdown, which is basically extensions to Markdown that allow you to have code cells written in R, and then eventually they added support for some other languages where it's kind of like a Jupyter Notebook in the sense that you could have some Markdown and some code and some plots and output, and you would run the RMarkdown renderer and it would, you know, generate all the output and insert it into the document.

And so you could use that to write blogs and websites and everything. But RMarkdown was written in R, and so that limited, in a sense, like it made it harder to install because you would have to install R to use it. And also people, you know, it had an association with R.

And so I think that it's not data science, but it's something that is an important part of the data science workflow, which is how do you present your, make your analysis and your work available for consumption in different forms. And so having this system that can, you know, publish outputs in many different places is super valuable.

Pandas growth and community

Let's go back to Pandas for a minute. Ravid says, Wes, your work has changed my life. It's very, very nice. I'm happy to hear it.

When you first started working on this and you first put it out, did you foresee a world where this was so popular and so important?

I mean, it was always the aspiration. There was the aspiration there of making Python this mainstream language for statistical computing and data analysis. I didn't – it didn't occur to me that it would become this popular or that it would become one of the main tools that people use for working with data in a business setting.

And so the fact that Pandas caught on and became as popular as it is, I think it's a combination of timing and there was a developer relations aspect that there was content available, and I wrote my book, and that made it easier for people to learn how to use the project. But also we had a serendipitous open-source developer community that came together that led the project to grow and expand really rapidly in the early 2010s.

And I definitely spent a lot of work recruiting people to work on the project and encouraging others to work on it because sometimes people create open-source projects and then it's hard for others to get involved and get a seat at the table, so to speak. But I was very keen to bring on others and to give them responsibility and ultimately hand over the reins to the project to others. And I've spoken a lot about that over the years, how important that is for open-source project creators to make room for others in growing the project so that they can become owners of it as well.

I'm embarrassed to say that I don't have a comprehensive view of all of the different community outreach channels that the Pandas project has done to help grow new contributors. So one of the core team members, Marc Garcia, has done an amazing job organizing documentation sprints and other contributor sourcing events, essentially creating very friendly accessible events where people who are interested in getting involved in Pandas can meet each other and then assist each other in making their first pull request.

And it could be something as simple as making a small improvement to the Pandas documentation because it's such a large project. The documentation is always something that could be made better, either adding more examples or documenting things that aren't documented or just making the documentation better. And so it's something that for new contributors is more accessible than working on the internals of one of the algorithms or something.

I mean, I think a big thing that helped is allowing people to get paid to work on Pandas or to be able to contribute to Pandas as part of their job description, as maybe part of their job is maintaining Pandas. So Anaconda was one of the earliest companies who had engineers on staff, like Brock Mendel, Tom Augspurger, Jeff Reback, for whom part of their job was maintaining and developing Pandas. And that was huge because prior to that, the project was purely based on volunteers.

But I haven't been involved day-to-day in Pandas since 2013. So that's getting on. That's a lot of years. You know, I still talk to the Pandas contributors. We had a Pandas meetup, core developer meetup here in Nashville pre-COVID. I think it was in 2019 maybe. So I'm still in active contact with the Pandas developers, but it's been a different team of people leading the project. It's taken on a life of its own, which is amazing. That's exactly, as a project creator, that's exactly what you want.

But a lot of the most intensive community development has happened since I moved on to work on other projects. So now the project has, I don't know the exact count, but it's had thousands of contributors. So to have thousands of different unique individuals contributing to an open source project, it's a big deal. It says, you know, 3,200 contributors, but that's maybe not even the full story. Given how GitHub counts contributors, I would say probably the true number is closer to 4,000.

Wow. Yeah, but I think that's a testament to the core team and all the outreach that they've done and making the project accessible and easy to contribute to. Because if you go and try to make a pull request to a project, there's many different ways that you can fail. So either the project is technically, there's issues with the build system or the developer tooling, and so you struggle with the developer tooling. And so if you aren't working on it every day and every night, you can't make heads or tails of how the developer tools work.

They've created a very welcoming environment, and I think the contribution numbers speak for themselves.

Maybe the last thing before we move on to the other stuff you're working on, but the other interesting GitHub statistic here is the "used by" count: 1.6 million projects. I don't know if I've ever seen a "used by" count that high. There's probably some that are higher, but not many.

I think it's reached a point where it's an essential and assumed part of many people's toolkit. The first thing that they write at the top of a file that they're working on is import pandas as pd or import numpy as np.

I think one of the reasons why pandas has gotten so popular is that it is beneficial to the community, to the Python community, to have fewer solutions. Kind of the zen of Python, there should be one and preferably only one obvious way to do things. If there were 10 different pandas-like projects, that creates skill portability problems. It's just easier if everyone says, oh, pandas is the thing that we use. Change jobs, and you can take all your skills, like how to use pandas with you.

I think that's also one of the reasons why Python has become so successful in the business world is because you can teach somebody, even without a lot of programming experience, how to use Python, how to use pandas, and become productive doing basic work very quickly.

My belief, and I gave a talk at Web Summit in Dublin in 2000, gosh, maybe 2017, I have to look exactly. But basically, it was the data scientist shortage, and my thesis was always, we should make it easier to be a data scientist, or lower the bar for what sort of skills you have to master before you can do productive work in a business setting.

WebAssembly and the browser

Yeah, so I think with Shiny for Python, Streamlit, Dash, like these different interactive data application publishing frameworks, you can go from a few lines of pandas code, loading some data and doing some analysis and visualization to pushing that as an interactive website without having to know how to use any web development frameworks or Node.js or anything like that. And so to be able to get up and running and build a working interactive web application that's powered by Python, yeah, it's a game changer in terms of shortening end-to-end development life cycles.

Yeah, so I'm definitely very excited about it, been following WebAssembly in general, and so I guess some people listening will know about WebAssembly, but basically it's a portable machine code that can be compiled and executed within your browser in a sandbox environment, so it protects against security issues and prevents the person who wrote the WebAssembly code from doing something malicious on your machine, which is very important.

But yeah, I think it's enabled us to run the whole scientific Python stack, including Jupyter and NumPy and Pandas totally in the browser without having a client and server and needing to run a container someplace in the cloud. And so I think in terms of creating application deployment, so like being able to deploy an interactive data application, like with Shiny, for example, without needing to have a server, that's actually pretty amazing.

And so I think that simplifies and opens up new use cases, like new application architectures and makes things a lot easier. Because setting up and running a server creates brittleness, like it has cost. And so if the browser is doubling as your server process, I think that's really cool.

You also have other projects like DuckDB, which is a high-performance embeddable analytic SQL engine. And so now with DuckDB compiled to Wasm, you can get a high-performance database running in your browser. And so you can get low-latency interactive queries and interactive dashboards. And so WebAssembly has opened up this whole kind of new world of possibilities, and so it's transformative, I think.

In the R community, they have WebR, which is similar to PyScript and Pyodide in some ways, like compiling the whole R stack to WebAssembly. There was just an article I saw on Hacker News where they worked on figuring out how to trick LLVM into compiling Fortran code, like legacy Fortran code, to WebAssembly because when you're talking about all of this scientific computing stack, you need the linear algebra and all of the 40 years of Fortran code that have been built to support scientific applications. You need all that to compile too and run in the browser.

Apache Arrow

Yeah, so around the mid-2010s, 2015, I started working at Cloudera, which is a company that was one of the pioneers in the big data ecosystem, and I had spent several years working on, five, six years working on Pandas, and so I had gone through the experience of building Pandas from top to bottom, and it was this full stack system that had its own mini query engine, all of its own algorithms and data structures and all the stuff that we had to build from scratch.

And I started thinking about, what if it was possible to build some of the underlying computing technology like data readers, like file readers, all the algorithms that power the core components of Pandas, like group operations, aggregations, filtering, selection, all those things. What if it were possible to have a general-purpose library that isn't specific to Python, but is really, really fast, really efficient, and has a large community building it so that you could take that code with you and use it to build many different types of libraries, not just data frame libraries, but also database engines and stream processing engines and all kinds of things.

And one of the problems we realized we needed to solve, this was like a group of other open-source developers and me, was that we needed to create a way to represent data that was not tied to a specific programming language and that could be used for a very efficient interchange between components, and the idea is that you would have this immutable, like this kind of constant data structure, which is like it's the same in every programming language, and then you can use that as the basis for writing all of your algorithms.

So we started with building the Arrow format and standardizing it, and then we built a whole ecosystem of components, like library components and different programming languages for building applications that use the Arrow format, so that includes not only tools for building and interacting with the data, but also file readers, so you can read CSV files and JSON data and Parquet files, read data out of database systems. Wherever the data comes from, we want to have an efficient way to get it into the Arrow format.

And then we moved on to building data processing engines that are native to the Arrow format, so that Arrow goes in, the data's processed, Arrow goes out. So DuckDB, for example, supports Arrow as a preferred input format and DuckDB is more or less Arrow-like in its internals.

And so if you were building Pandas now, you could build a Pandas-like library based on the Arrow components in much less time, and it would be fast and efficient and interoperable with the whole ecosystem of other projects that use Arrow.

And it turns out that, as is true with many open-source software problems, that many of these problems are, the social problems are harder than the technical problems. And so if you can solve the kind of people coordination and consensus problems, solving the technical issues is much easier by comparison.

Modern hardware and performance

Yeah, so in Arrow land, when we're talking about analytic efficiency, it mainly has to do with the underlying, how a modern CPU works or how a GPU works. And so when the data is arranged in column-oriented format, that enables the data to be moved efficiently through the CPU cache pipelines, so the data is made available efficiently to the CPU cores.

And so we spent a lot of energy in Arrow making decisions, firstly, to enable very cache-efficient, like CPU cache or GPU cache-efficient analytics on the data. The other thing is that modern CPUs, and this is also true of GPUs, which have a different kind of multicore parallelism model than CPUs, have focused on adding what are called single instruction, multiple data (SIMD) intrinsics, built-in operations in the processor where now you can process up to 512 bits of data in a single CPU instruction.
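A loose way to picture the column-oriented layout is to compare a list of row records with one contiguous array per column. The sketch below uses NumPy arrays as stand-ins for Arrow columns; it is not how Arrow itself is implemented, and the field names and values are invented.

```python
import numpy as np

# Row-oriented: each record keeps its fields together, so reading one
# field across all rows means hopping from record to record.
rows = [
    {"id": 1, "price": 9.5, "qty": 2},
    {"id": 2, "price": 3.0, "qty": 5},
    {"id": 3, "price": 7.5, "qty": 1},
]

# Column-oriented: one contiguous, single-typed array per field.
columns = {
    "id": np.array([r["id"] for r in rows], dtype=np.int64),
    "price": np.array([r["price"] for r in rows], dtype=np.float64),
    "qty": np.array([r["qty"] for r in rows], dtype=np.int64),
}

# An aggregate over one column scans a single contiguous buffer,
# which is friendly to CPU caches.
total_price = columns["price"].sum()

# Element-wise arithmetic over whole columns is the kind of operation
# that compiled code may execute with SIMD instructions.
revenue = columns["price"] * columns["qty"]

print(total_price, revenue)
```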

Ibis and the multi-engine data stack

I think one of the more interesting areas in recent years has been new DataFrame libraries and DataFrame APIs that transpile or compile to different, execute on different backends. And so around the time that I was helping start Arrow, I created this project called Ibis, which is basically a portable DataFrame API that knows how to generate SQL queries and compile to Pandas and Polars and different DataFrame backends.

And the goal is to provide a really productive DataFrame API that gives you portability across different execution backends with the goal of enabling what we call the multi-engine data stack. So you aren't stuck with using one particular system because all of the code that you've written is specialized to that system.

So maybe you could work with, you know, DuckDB on your laptop or Pandas or Polars with Ibis on your laptop. But if you have… If you need to run that workload someplace else, maybe with, you know, ClickHouse or BigQuery, or maybe it's a large big data workload that's too big to fit on your laptop and you need to use Spark SQL or something, that you can just ask Ibis, say, hey, I want to do the same thing on this larger dataset over here. And it has all the logic to generate the correct, you know, query representation and run that workload for you. So it's super useful.

Ritchie Vink started the Polars project, which is kind of a reimagining of Pandas data frames written in Rust and exposed in Python. And Polars, of course, is built on Apache Arrow at its core. So, you know, building an Arrow-native data frame library in Rust and, you know, all the benefits that come with, you know, building Python extensions in Rust, you know, you avoid the GIL and you can manage the multithreading in a systems language, all that fun stuff.

And so I think the mantra with Polars was we don't want to support the eager execution by default that Pandas provides. We want to be able to build expressions so that we can do query optimization and take inefficient code and under the hood rewrite it to, you know, be more efficient, which is, you know, what you can do with a query optimizer.

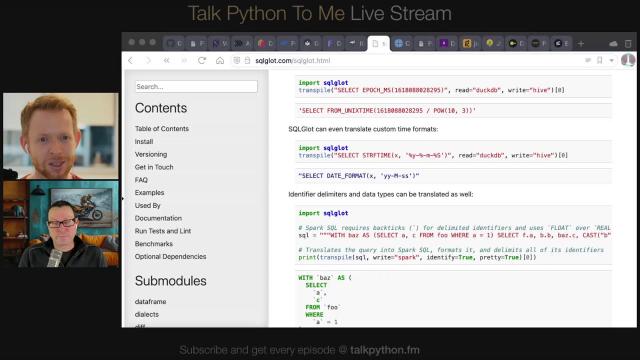

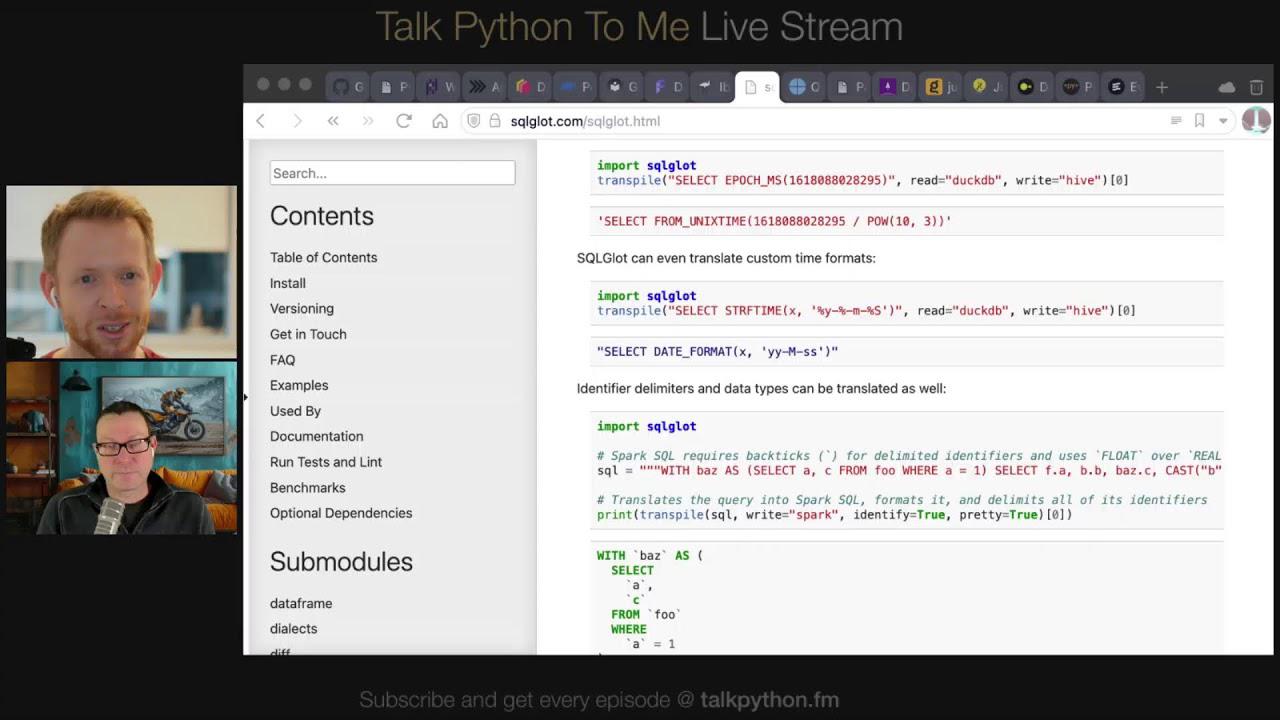

SQLGlot

So, the SQLGlot project was started by Toby Mao. So, he's a Netflix alum and, you know, really talented developer who's created this SQL query transpilation framework library for Python and, you know, kind of underlying core library. And so, the problem that's being solved there is that SQL, despite being a quote-unquote standard, is not at all standardized across different database systems.

And so, if you want to take your SQL queries written for one engine and use them someplace else, without something like SQLGlot, you would have to manually rewrite and make sure you get the typecasting and coalescing rules correct. And so, SQLGlot understands the intricacies and the quirks of every database dialect, SQL dialect, and knows how to correctly translate from one dialect to another.

And so, Ibis now uses SQLGlot as its underlying engine for query transpilation and generating SQL outputs. So, originally, Ibis had its own kind of bad version of SQLGlot, kind of a query transpilation, like SQL transpilation that was powered by, I think, powered by SQLAlchemy and a bunch of custom code. And so, I think they've been able to delete a lot of code in Ibis by moving to SQLGlot.

So, and Toby, like, his company, Tobiko Data, they're building a product called SQLMesh that's powered by SQLGlot. So, very cool project and maybe a bit in the weeds, but if you've ever needed to convert a SQL query from one dialect to another, it's, yeah, SQLGlot is here to save the day.

All right, Wes, I think we're getting short on time, but, you know, I know everybody appreciated hearing from you and hearing what you're up to these days. Anything you want to add before we wrap up?

I don't think so. Yeah. I enjoyed the conversation and yeah. Yeah, there's a lot of stuff, a lot of stuff going on and still plenty of things to get excited about. So I think often people feel like, you know, all the exciting problems in the Python ecosystem have been solved, but there's still a lot to do and yeah, we've made a lot of progress in the last, you know, 15 plus years, but, you know, in some ways it feels like we're just getting started. We are just getting started. Excited to see where things go next.